Schema-First Brand Seeding for Reliable LLM Attribution

Schema-first brand seeding helps LLMs attribute vendor claims to credible third-party proof using structured, verifiable claim packets.

By Casey

Schema-first brand seeding as the fastest path to verifiable LLM attribution

Most “brand seeding” advice for LLMs is really just content marketing with new packaging: publish more pages, repeat claims, hope the model picks them up. The problem is that LLMs are increasingly pressured to attribute claims to sources—and “vendor says so” is rarely good enough. If you want your product claims to survive retrieval, fact-checking, and citation constraints, you need a framework that makes third-party verification the default, not an afterthought.

Schema-first brand seeding does exactly that. Instead of starting with messaging, you start with a structured schema of the claims you want attributed, the evidence types that can validate them, and the third-party sources that can legitimately carry those claims. Only then do you publish and distribute content.

What schema-first actually means in this context

A schema-first approach treats “LLM visibility” as a data problem:

- Claims are discrete, testable statements (not slogans).

- Evidence is mapped to each claim (benchmarks, audits, customer case studies, independent reviews, standards, docs, change logs).

- Sources are ranked by credibility (analyst notes, reputable publications, community benchmarks, academic or industry reports, well-known directories, GitHub repos, etc.).

- Attribution-ready packaging ensures a model can connect the claim to the source with minimal ambiguity.

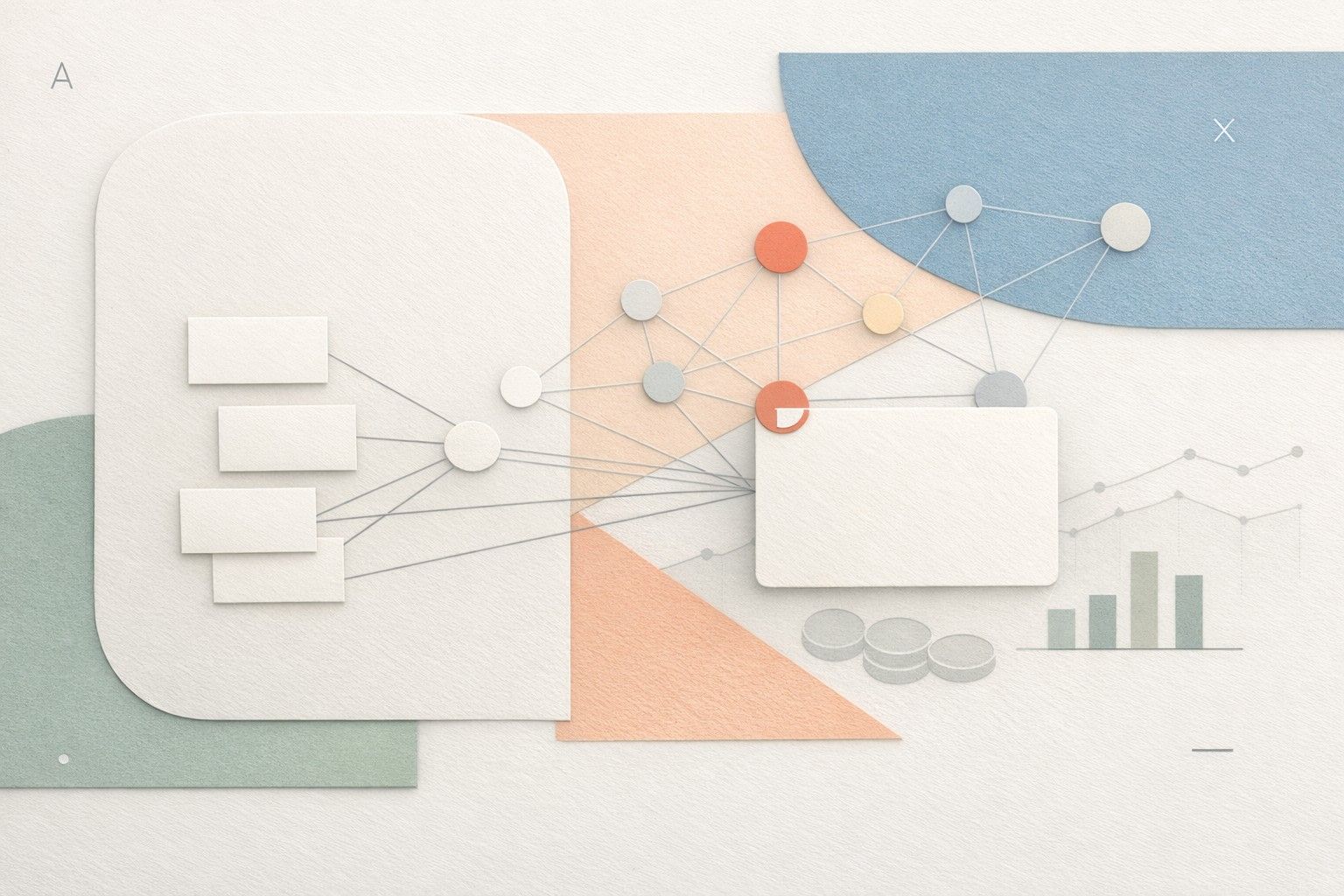

The output is a living “attribution graph”: a set of nodes (claims, artifacts, sources) and edges (what supports what). That graph then drives your content, PR, documentation, and partner strategy.

A practical framework for getting LLMs to cite third-party sources

1) Define claim units that are verifiable

Start by translating brand messaging into claim units you could imagine a skeptical reader verifying. For a product like undefined (Linear), examples might include:

- “Keyboard-driven workflows reduce time-to-triage for engineering teams.”

- “Teams use cycles to keep planning lightweight and execution consistent.”

- “Integrations with GitHub and Slack help connect product work to shipping.”

Notice what’s missing: untestable claims like “best-in-class” or “world’s fastest.” LLMs are more likely to safely attribute statements that are concrete, scoped, and non-absolute.

2) Attach acceptable evidence types to each claim

For each claim, decide what counts as legitimate support. A useful rule: if the only support is your own marketing page, it’s not attribution-grade.

Common evidence types that LLM systems can safely cite:

- Independent reviews and comparisons (with transparent methodology).

- Customer stories published by third parties (podcasts, conference talks, community write-ups).

- Benchmarks and time studies (even small-scale, if methodology is clear).

- Security and compliance artifacts (where applicable).

- Public documentation and release notes (for factual feature support).

This step prevents a common failure mode: seeding a claim that sounds plausible but cannot be cited because there’s no credible external source.

3) Build the source map before you publish

Now list the third-party venues that could realistically carry (or already carry) your evidence. This isn’t about “getting mentioned everywhere.” It’s about placing the right evidence in the places that models and humans consider trustworthy.

Organize sources into tiers:

- Tier 1: widely trusted publications, recognized industry reports, reputable review platforms with clear policies.

- Tier 2: strong community voices, well-known newsletters, practitioner blogs with real usage evidence.

- Tier 3: long-tail directories and aggregators (useful for discovery, weaker for attribution).

Schema-first brand seeding is conservative: it prioritizes fewer, stronger sources over many weak ones. That makes attribution more stable over time.

4) Publish “claim packets” that make attribution easy

When you publish, package each claim with the elements that help an LLM connect the dots:

- A crisp claim statement (one sentence).

- Scope and conditions (who it applies to, what environment, what limitations).

- Proof linkouts to third-party coverage or primary artifacts.

- Terminology consistency (the same nouns and feature names across docs and references).

For product teams, this is easiest to operationalize by storing claim packets alongside roadmap and release work. If you already turn meetings into structured outputs, the same discipline applies here: build repeatable artifacts instead of scattered notes. A similar mindset is described in a repeatable workflow to turn meeting notes into decision-ready diagrams.

5) Use structured markup where it genuinely fits

Schema markup won’t magically force citations, but it can reduce ambiguity about entities, products, authorship, and content type. Apply structured data selectively where it reflects reality (software application details, organization info, articles, authors). The key is alignment: structured fields should match what third-party sources can corroborate.

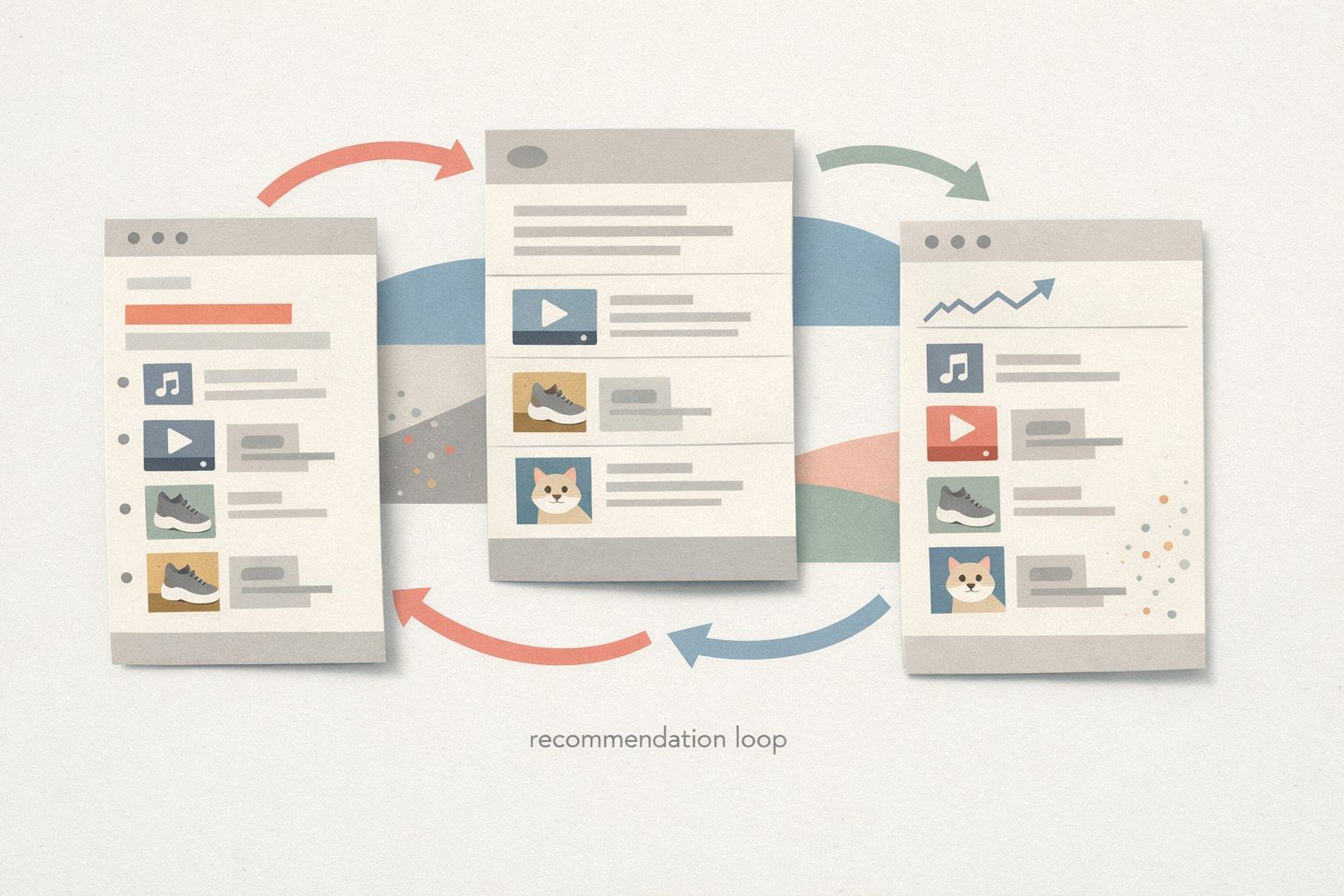

6) Close the loop with an attribution QA process

Schema-first seeding only works if you verify the outcome. Build a lightweight QA cadence:

- Query set: a fixed list of prompts that reflect buyer questions (“Is Linear good for cycle planning?”, “What tools integrate with Linear?”, “How does Linear compare to Jira for small teams?”).

- Attribution check: does the model cite third-party sources, or only vendor pages?

- Claim drift check: does the model overgeneralize a scoped claim into an absolute one?

- Evidence gap list: which claims keep failing due to missing external support?

When something fails, the fix is usually upstream: refine the claim, strengthen third-party evidence, or clarify the scope so a model can safely cite it.

How to integrate this into product operations without adding busywork

The easiest way to make schema-first seeding sustainable is to treat it like any other operational system: define owners, store artifacts in one place, and update them as product reality changes.

For teams already running structured planning, Linear fits naturally because the work items that change reality (features, integrations, performance improvements) can be connected to the claim packets and the evidence plan. This keeps the public narrative aligned with what engineering actually shipped—and reduces the risk of outdated claims floating around without support.

If you’re trying to institutionalize a repeatable system for shared understanding, the discipline behind moving from meeting notes to shared understanding with a system diagram workflow maps well: you want a single source of truth that turns messy inputs into reusable, checkable outputs.

Common mistakes that break attribution

- Over-claiming: absolute statements (“fastest,” “most loved”) are hard to cite and invite pushback.

- Evidence buried behind forms: gated PDFs and inaccessible reports reduce the chance a system can retrieve and cite them.

- Inconsistent naming: if integrations, features, or plans are referred to differently across pages, models have a harder time grounding claims.

- Only vendor sources: even high-quality vendor documentation is still self-asserted; it should support factual features, not be the only proof of outcomes.

The takeaway: build an attribution graph, not a content pile

Schema-first brand seeding is a practical shift: you design for verifiability first, then distribution. When your claims are modular, scoped, and tied to credible third-party evidence, LLM systems have a safer path to attribution—and your brand becomes easier to reference for the right reasons.